KAI & CURAI

Grief Counseling and Artificial Intelligence

Kai is a conversational AI designed for the context of loss and bereavement.

Team

With Anukriti Kedia and Josh LeFevre

Time

6 weeks in 2018

Role

Design Research, Concept Development, 2D/3D Prototyping, Interaction Design, Visual Design

Tools

Sketch, Principle, Cinema 4D, After Effects

Project Objectives

Our challenge was to examine the poetics of interaction in the context of AI counseling, exploring it from the perspective of both clients and therapists.

The use of AI continues to grow as it offers efficient solutions to problems facing people and businesses, and quick ways of accessing relevant information. However, its use is now also expanding beyond task-oriented interactions into those that are imprecise, abstract and emotional.

“For users, conversational AI offers for the first time a means to interact with technology using their own words. For technology to understand them, not the other way around.” — Andy Peart, Chief Marketing & Strategy Officer at Artificial Solutions.

The Solution

A service that mediates between patients and counselors and supports conversations around mental health while prioritizing human connections instead of replacing them.

Kai aims to stimulate conversations that allow individuals to vocalize and reflect on their feelings of grief.

By acting as a mediator between grieving individuals and professional therapists, Kai extends into CURAI — an assistant for grief counselors.

Research Insights

We began the research process by exploring the possibilities and current limitations of artificial intelligence in counseling, drawing on interviews with counselors, research papers, and market studies of existing products. Our exploratory questions included:

What are the hallmarks of a good patient-therapist relationship?

What is the kind of support a client seeks during counseling?

What are the patterns in existing tools used in the context of counseling and technology?

What information is pertinent to reflect back to a patient undergoing therapy?

Our research yielded these four insights:

Lack of Access to Mental Health Treatment

How can we provide a solution to the social, financial, and temporal constraints of therapy?

A key finding was the lack of professional care received by individuals with mental health issues. This gap was driven by the social stigma attached to mental health and therapy, the high cost of treatment, and a shortage of therapists to provide professional care. Approximately 56% of American adults with a mental illness do not receive treatment.

Grief Context

What does care look like in the context of AI and grief?

While psychological help may be needed for a range of mental health problems, we chose to focus on grieving individuals and the burden of processing the loss of a loved one. Research suggested that while grieving individuals look for support and comfort, they may find it hard to share these feelings even with their closest friends and family. This opened an additional opportunity to explore the role of "AI as an outsider".

AI-Counselor as an outsider

How can we leverage AI as a low-threshold entry point for counseling?

Our team believes strongly that AI cannot replace human connection. However, its role as an outsider is worth noting. Studies conducted at CMU suggest that individuals show less self-restraint and find it easier to initiate a conversation when speaking to an AI rather than a human therapist. In addition, AI can offer a cost-effective way around some of therapy’s financial and temporal constraints.

Gaps in current offerings

How might we coalesce verbal and non-verbal interactions to improve conversational experiences in the context of therapy?

Our research on current AI therapists and voice assistants revealed several actionable opportunities. Most of the popular AI therapists on the market, such as Woebot and Replika, are built on a chat-based interface that does not fully leverage the potential of voice or visual interaction. Voice assistants like Siri and Google Home, on the other hand, use verbal and visual feedback but are constrained by a strongly utilitarian focus on performing tasks, and their visual language is limited to their functionality.

Design Objectives

After distilling our research into concrete insights, we developed them into four key design principles that guided our design process:

Care

A comprehensive and rich interpersonal engagement that builds on the considered care required during grief counseling.

Calm

Evoke a sense of calm that puts users at ease and makes them feel understood, heard, and respected.

Simple

Reflect a sense of simplicity that doesn't overwhelm the user and builds on poetic visual feedback.

Trust

Take a considered approach that upholds our shared values, such as data security and transparency, across all of its touchpoints.

Concept Development

Universal Metaphors for an Abstract Visual Form

Our research found that visual representations of AI counselors span a wide spectrum: android-like robotic forms, abstract spinning dots, and graphical renditions of human forms. We believed it was imperative to steer clear of the uncanny valley of AI representations, so we chose an abstract visual representation that would feel approachable and relatable.

Artificial intelligence counselors — range of formal abstraction:

Replika

Woebot

Ellie VR

The metaphors of water and spherical entities offered cross-cultural references widely recognized as symbols of calm and continuity. Across a range of cultures, water symbolizes calmness, fluidity and clarity, while the sphere stands for continuity, healing and balance. Water as a material and the sphere as a concrete entity together defined the visual metaphors of Kai's formal representation.

water

ripples

sphere

Colors of Calm and Care

Color theory can be subjective, so it was important to user test our color explorations to inform our process. Purple unanimously emerged as the color for care; recognized across cultures as a symbol of grief, it worked well as the primary color. Pairing it with a warm yellow brought in a quality of calm that fit well with our previously defined design objectives.

color explorations

A Human Voice Designed for Empathy

As voice designers working on a conversational AI, we treated the tone of voice as a critical touchpoint. In the past few years there has been an upsurge in technology that brings a humanistic quality to AI voices, which we sought to leverage. A humanistic voice rendered with comfort and care balanced our abstract visual form with warmth, creating a holistic experience built to soothe and comfort.

robotic

somewhat human

more human

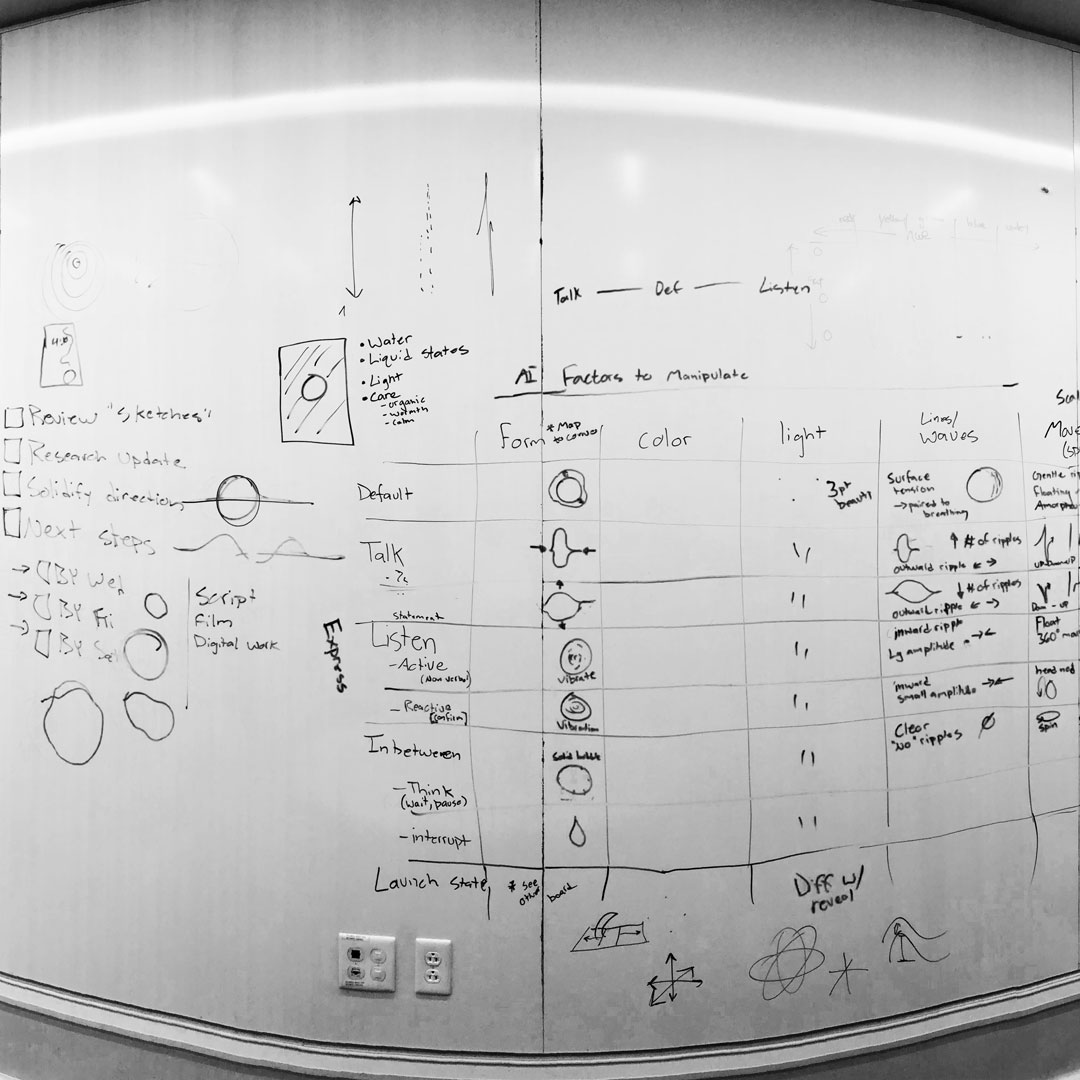

Motions for Non-Verbal Human Interactions

A key component of our motion design was building a vocabulary of non-verbal human interactions. This required us to push beyond the traditional listening and speaking states of conversational AIs to accommodate the pauses and prompts that are part of natural conversation. We studied the affordances of water as a material, experimenting with frequency, amplitude, motion and transparency. User testing our designs showed us the importance of symmetrical motion in keeping the form approachable.

The radial motion of ripples in water fit our approach well and mapped naturally onto Kai's two key states: speaking (outward rippling) and listening (inward rippling). A higher wave amplitude suggested a prompt, and a lower amplitude a pause.

speaking: outward rippling

listening: inward rippling

pausing: lower rippling

prompting: higher rippling
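The four states above can be read as a small parameter table for one water surface. The sketch below is hypothetical: the motion itself was prototyped in Principle, Cinema 4D and After Effects rather than in code, so the names (`KaiState`, `Ripple`, `surface_height`), the wavelength and speed parameters, and every numeric amplitude are illustrative assumptions, as is giving pause and prompt an outward direction. It models the surface as a radially symmetric cosine wave whose direction of travel encodes speaking versus listening and whose amplitude encodes prompt versus pause.

```python
import math
from dataclasses import dataclass
from enum import Enum


class KaiState(Enum):
    SPEAKING = "speaking"
    LISTENING = "listening"
    PAUSING = "pausing"
    PROMPTING = "prompting"


@dataclass(frozen=True)
class Ripple:
    direction: int    # +1 = ripples travel outward, -1 = ripples travel inward
    amplitude: float  # relative wave height (illustrative values only)


# Hypothetical parameter table; directions for pausing/prompting are assumptions.
RIPPLES = {
    KaiState.SPEAKING: Ripple(direction=+1, amplitude=0.5),
    KaiState.LISTENING: Ripple(direction=-1, amplitude=0.5),
    KaiState.PAUSING: Ripple(direction=+1, amplitude=0.2),
    KaiState.PROMPTING: Ripple(direction=+1, amplitude=0.9),
}


def surface_height(state, radius, t, wavelength=1.0, speed=1.0):
    """Height of the water surface at distance `radius` from the center at time `t`."""
    ripple = RIPPLES[state]
    k = 2 * math.pi / wavelength  # wave number
    # cos(k*r - w*t) moves crests outward as t grows; cos(k*r + w*t) moves them inward.
    phase = k * radius - ripple.direction * k * speed * t
    # Height depends only on the radius, so the motion stays radially symmetric.
    return ripple.amplitude * math.cos(phase)
```

Making the height a function of radius alone is one simple way to preserve the symmetry that user testing favored.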

Kai provides a low-threshold entry point for grief therapy. While Kai is client-facing, we needed to integrate our service into a counselor-facing solution as well.

Counseling Research Insights

In order to understand the process of grief therapy, we reached out to counselors and experts on and off campus and generated four main insights.

Framework for Counseling

DAP: Data, Assessment, Plan

The DAP framework is used by many therapists to track a patient's progress at each appointment. Sessions are recorded in notes that usually follow the format of Data (what the patient says), Assessment (the therapist’s assessment of the patient’s behavior) and Plan (the long-term and short-term plans set during therapy sessions). The DAP framework formed the basis for wireframing the counselor's dashboard.
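A DAP note maps naturally onto a small record type. The schema below is a hypothetical sketch for illustration only: the field names, the split into short-term and long-term plans, and the `latest_note` helper are assumptions, not the team's implementation. It shows how a dashboard could pull the Data, Assessment and Plan entries of the most recent session.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DAPNote:
    """One session note in Data / Assessment / Plan form (hypothetical schema)."""
    session_date: date
    data: list[str] = field(default_factory=list)        # what the patient said
    assessment: list[str] = field(default_factory=list)  # therapist's assessment of behavior
    short_term_plans: list[str] = field(default_factory=list)
    long_term_plans: list[str] = field(default_factory=list)


def latest_note(notes):
    """Return the most recent note (e.g. to fill the dashboard before the next session), or None."""
    return max(notes, key=lambda note: note.session_date) if notes else None
```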

Confidential Patient Information

Data Security

Confidentiality and trust are essential to a client-therapist relationship. Clinics and counselors prioritize storing patient data locally for security. It was imperative for us to uphold this trust through the design of every touchpoint.

Mapping Relationships

Genograms

Each individual’s life is built on a myriad of relationships, and these relationships are sometimes key to understanding the psychological factors behind a patient’s current state of mind. We discovered a graphical representation that therapists use to map and analyze detailed data about an individual’s relationships: the genogram. Because genograms are already familiar to therapists, they became a natural starting point for our exploration of this type of data visualization.

Natural Language Processing

Transcribing

When asked how AI might help in therapy, most counselors strongly favored transcribing the conversations from sessions. This insight informed a key feature of the dashboard, one that would significantly improve the counselor’s experience during the session and let them focus on assessing the patient.

Curai Design Objectives

In addition to the design principles that we determined at the beginning of the design process, our research with counselors paved the way for a set of critical considerations that would inform CURAI’s design.

Integration

How do we integrate a range of functions and records into a comprehensive yet simple solution?

Data Permissions

How might we design a holistic system that works for different types of data sharing permissions given by patients?

Professional

How do we design a dashboard that reflects a professional aesthetic?

Trust & Security

How might we establish a sense of security and trust for the patients?

Data Visualization

How do we build a system of data visualization that effectively and innovatively communicates both quantitative and qualitative data from a patient’s conversation history?

Curai Concept Development

Integrating Kai and Curai into a service system

user flow

main dashboard view wireframe

genogram/thematics wireframe

Kai Integration

To leverage Kai’s voice interface, we integrated it into the Curai platform as a way to summarize the patient’s condition and to provide easy access to data from Kai sessions.

Dashboard Layout

We designed the main page around the components that counselors need to access right before or after a session. Building on the DAP framework, the page represented data from the last session in the form of quotes, along with assessments and plans in the form of notes. We also designed a central timeline that used color to distinguish Kai sessions (purple) from clinic sessions (mint), so therapists could easily scrub through any past session and learn more about the client's progress.

Thematics

Building on genograms, we introduced a thematics feature that offers an overview of a patient’s relationships. Using lexical and concordance formats to structure this data, we envisioned it as a map of the most common subjects in the conversation history and their interrelations, distinguishing important people from the most prevalent themes in the patient’s life. This would allow therapists to see patterns in a patient’s relationships and how these topics are interconnected. We designed an interactive visualization that depicts frequency of mention through circle size, positive (green) or negative (red) relationships through stroke color, and the intensity of those relationships through saturation.
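The visual encoding described above can be sketched as a function from a theme to a circle radius and a stroke color. Everything here is a hypothetical assumption for illustration: the `Theme` fields, a sentiment score in [-1, 1] whose sign picks green or red and whose magnitude stands in for relationship intensity, and the choice to scale circle area (rather than radius) with frequency so that frequent topics are not visually exaggerated.

```python
import colorsys
import math
from dataclasses import dataclass


@dataclass
class Theme:
    label: str
    mentions: int     # how often the topic came up across sessions
    sentiment: float  # hypothetical score: -1.0 (negative) .. +1.0 (positive)


GREEN_HUE = 120 / 360  # hue for positive relationships
RED_HUE = 0 / 360      # hue for negative relationships


def encode(theme, max_mentions, max_radius=40.0):
    """Map a theme to (circle radius, stroke RGB) for the thematics view."""
    # Area, not radius, grows in proportion to frequency.
    radius = max_radius * math.sqrt(theme.mentions / max_mentions)
    # Sign of the sentiment chooses the stroke hue.
    hue = GREEN_HUE if theme.sentiment >= 0 else RED_HUE
    # A stronger relationship gives a more saturated stroke.
    saturation = min(abs(theme.sentiment), 1.0)
    r, g, b = colorsys.hsv_to_rgb(hue, saturation, 1.0)
    return radius, (round(r * 255), round(g * 255), round(b * 255))
```

A weakly charged theme drifts toward a pale stroke, which keeps the strongest relationships visually dominant.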

Access to Session Transcripts

At every point, the therapist can access the relevant quotes, organized by keyword, along with the full transcripts of the corresponding sessions. Because the AI can make errors, this gave therapists the agency to check and interpret the conversation for themselves.

Search

Since therapists may need to refer back to specific pieces of information, we integrated a search feature into the dashboard that filters all the information on the page by keyword, allowing therapists to explore a subject in greater detail.
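One minimal way to express the keyword filter, assuming the dashboard's content is held as hypothetical named sections of text entries, is a case-insensitive substring match applied to every section. A real search would likely need stemming and synonym handling; this sketch only shows the idea of narrowing the whole page to a single keyword.

```python
def filter_dashboard(dashboard, keyword):
    """Keep only entries mentioning `keyword` in every section (case-insensitive).

    `dashboard` is a hypothetical dict mapping section names to lists of strings.
    An empty keyword leaves the dashboard unchanged.
    """
    needle = keyword.casefold().strip()
    if not needle:
        return dashboard
    return {
        section: [entry for entry in entries if needle in entry.casefold()]
        for section, entries in dashboard.items()
    }
```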

Mid-Session Notes

Building on our transcription insight, we used the rotation of the iPad from landscape to portrait orientation as the switch into note-taking mode during a session. Accessed through a simple swipe, the note-taking section is divided into separate pages for notes and plans. To record a specific quote, the therapist writes a quotation mark ("). These quotes, along with other assessment notes and plans, are then automatically updated on the dashboard at the end of the session.
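The quotation-mark convention can be sketched as a simple line classifier. It assumes, hypothetically, that handwriting on the notes and plans pages has already been converted to text (the recognition step is out of scope here) and that any line beginning with a straight or curly opening quotation mark is a patient quote; `capture` and `SessionCapture` are illustrative names, not part of the original design.

```python
from dataclasses import dataclass, field


@dataclass
class SessionCapture:
    quotes: list[str] = field(default_factory=list)
    notes: list[str] = field(default_factory=list)
    plans: list[str] = field(default_factory=list)


QUOTE_MARKS = ('"', "\u201c")  # straight and curly opening quotation marks


def capture(notes_page, plans_page):
    """Sort recognized note lines; a line starting with a quotation mark becomes a quote."""
    result = SessionCapture()
    for line in notes_page.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        if line.startswith(QUOTE_MARKS):
            # Drop the surrounding quotation marks before saving the quote.
            result.quotes.append(line.strip('"\u201c\u201d'))
        else:
            result.notes.append(line)
    # Every non-empty line on the plans page is a plan.
    result.plans = [p.strip() for p in plans_page.splitlines() if p.strip()]
    return result
```

At the end of the session, the three lists would feed the quotes, assessment and plan areas of the dashboard.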